Why Reddit and YouTube matter for product research

If you’ve ever spent time researching a significant purchase, you’ve probably ended up on Reddit or YouTube. Not because you were specifically looking for them – but because they consistently surface more honest assessments than the first page of search results or the product’s own page. The reason is structural: Reddit’s voting system and moderation make large-scale fake review campaigns difficult. YouTube creators have audiences and reputations that create accountability for their assessments. Neither platform has the same financial incentives that make Amazon review manipulation so prevalent.

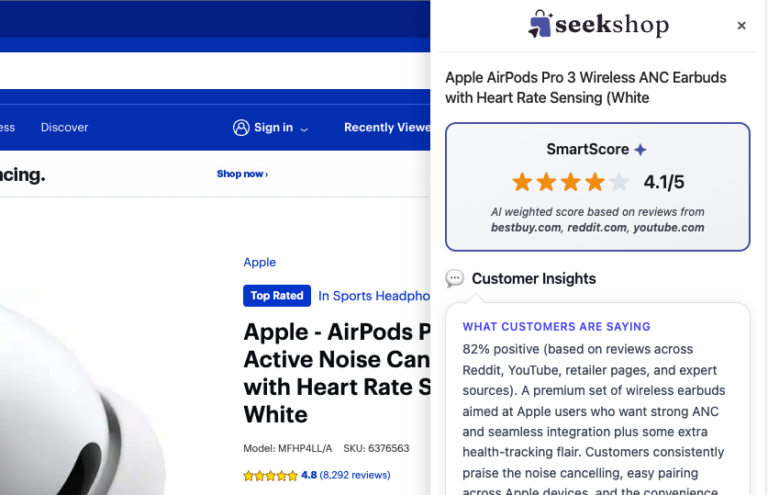

SeekShop’s SmartScore is built on the insight that Reddit and YouTube are the most valuable cross-check for consumer product decisions – and that aggregating them with Amazon and other retailer data produces a signal that’s substantially harder to manipulate than any single platform alone.

How SeekShop analyzes Reddit content

Reddit product discussions appear across hundreds of relevant communities – r/BuyItForLife, r/frugalmalefashion, r/HomeImprovement, category-specific subreddits, and general communities like r/AskReddit where product recommendations happen organically. The challenge with Reddit is that volume varies enormously by product and category, and raw sentiment analysis misses important context: a highly upvoted negative comment carries different weight than a buried positive one.

SeekShop’s Reddit analysis is vote-weighted – comments and posts that have received significant upvotes from the Reddit community carry more weight in the analysis than low-vote content. This matters because Reddit’s voting system is itself a quality filter: the community signals which opinions it finds credible, informative, or useful. We’re not just counting mentions; we’re aggregating community-validated sentiment.
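As a rough illustration of vote weighting, the sketch below averages comment sentiment so that each comment counts in proportion to a dampened function of its upvotes. The function name, the `(sentiment, upvotes)` input shape, and the logarithmic dampening are assumptions made for this example, not SeekShop's actual formula.

```python
import math


def vote_weighted_sentiment(comments):
    """Average sentiment, weighted by upvotes.

    comments: iterable of (sentiment, upvotes) pairs, where sentiment is
    assumed to be a float in [-1, 1]. Hypothetical input shape.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for sentiment, upvotes in comments:
        # log1p dampens very large vote counts; negative scores clamp to 0,
        # so every comment keeps a base weight of 1.
        weight = 1.0 + math.log1p(max(upvotes, 0))
        weighted_sum += weight * sentiment
        total_weight += weight
    return weighted_sum / total_weight if total_weight else 0.0


# One heavily upvoted negative comment versus two low-vote positive ones.
example = [(-1.0, 500), (1.0, 1), (1.0, 0)]
print(vote_weighted_sentiment(example))
```

In this example the single heavily upvoted negative comment outweighs the two low-vote positive ones, so the weighted result is negative even though a plain average of the three sentiments would be positive.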

Account tenure and reputation are also factored in. A long-tenure Reddit account with a track record in relevant communities carries more weight than a new account. This helps filter out the rare coordinated Reddit campaign, which tends to rely on newer accounts without established community standing.
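One way to picture tenure and reputation weighting is a multiplier that grows with account age and community standing, then levels off. The caps, the floor value, and the equal split between the two factors below are hypothetical choices for illustration only.

```python
def account_weight(age_days, community_karma,
                   age_cap_days=730, karma_cap=1000, floor=0.2):
    """Return a trust multiplier in [floor, 1.0] for a Reddit account.

    All thresholds are illustrative assumptions, not SeekShop's values.
    """
    # Each factor saturates at 1.0 once its cap is reached.
    age_factor = min(max(age_days, 0) / age_cap_days, 1.0)
    karma_factor = min(max(community_karma, 0) / karma_cap, 1.0)
    # New accounts still count a little (the floor) rather than being dropped.
    return floor + (1.0 - floor) * (0.5 * age_factor + 0.5 * karma_factor)


print(account_weight(30, 10))      # month-old account, little standing
print(account_weight(1500, 800))   # established account
```

Keeping a non-zero floor means a genuine new user is down-weighted rather than ignored, while a cluster of fresh accounts still has limited influence on the result.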

We also weight recency appropriately. Products evolve – a product that received great reviews two years ago but has had quality control problems in recent production runs should have that reflected in its SmartScore. Recent Reddit discussions get higher weight than older ones, with a smoothing factor that prevents a single bad batch of products from excessively distorting the longer-term signal.
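The recency weighting and smoothing described above could be sketched as an exponential decay blended with a long-term average. The half-life, the `smoothing` share, and the blending approach itself are assumptions chosen for this example; the article does not specify the actual method.

```python
def recency_weight(age_days, half_life_days=180.0):
    """Weight that halves every `half_life_days` (assumed value)."""
    return 0.5 ** (max(age_days, 0.0) / half_life_days)


def smoothed_sentiment(items, half_life_days=180.0, smoothing=0.3):
    """Blend a recency-weighted mean with the unweighted long-term mean.

    items: list of (sentiment, age_days) pairs. Hypothetical input shape.
    smoothing: share of the result taken from the long-term mean.
    """
    if not items:
        return 0.0
    long_term = sum(s for s, _ in items) / len(items)
    weights = [recency_weight(age, half_life_days) for _, age in items]
    total = sum(weights)
    if total == 0.0:  # every weight underflowed; fall back to long-term mean
        return long_term
    recent = sum(w * s for w, (s, _) in zip(weights, items)) / total
    return (1.0 - smoothing) * recent + smoothing * long_term


# Two old positive discussions and one fresh negative one.
history = [(1.0, 720), (1.0, 720), (-1.0, 0)]
print(smoothed_sentiment(history, smoothing=0.0))  # recency only
print(smoothed_sentiment(history))                 # with smoothing
```

With smoothing enabled, the fresh negative discussion still dominates, but the older positive history pulls the result back toward the long-term picture instead of being discarded.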

How SeekShop analyzes YouTube content

YouTube product reviews are uniquely valuable because they provide long-form, hands-on analysis that text reviews can rarely match. A YouTube creator who unpacks a product and uses it for several weeks can surface failure modes, quality issues, and use-case limitations that a written review doesn’t capture. This depth of information is a meaningful input to product quality assessment.

The challenge with YouTube is creator quality variation. The platform has a spectrum from professional product reviewers with testing rigs and long-term assessment to unboxing channels that spend two minutes with a product and declare it excellent. We differentiate between these by weighting creator signals: subscriber stability (channels that have maintained subscribers over time, not just grown them in a burst), engagement ratios (the ratio of comments and likes to views – an indicator of whether viewers found the content credible), and review depth (longer-form content that addresses multiple use cases and includes downsides is weighted more than brief positive impressions).
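The three creator signals above can be combined into a single weight in many ways. The sketch below uses simple proxies and an unweighted mean; the `ReviewVideo` fields, the caps, and the equal weighting are all hypothetical and only meant to show the shape of the idea.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReviewVideo:
    views: int
    likes: int
    comments: int
    duration_minutes: float
    mentions_downsides: bool
    monthly_subscribers: List[int]  # oldest first


def subscriber_stability(history):
    """Near 1.0 for steady counts; lower if any month swings sharply."""
    swings = [abs(b - a) / a for a, b in zip(history, history[1:]) if a > 0]
    if not swings:
        return 0.5  # not enough data: neutral default
    return 1.0 - min(max(swings), 1.0)


def engagement_ratio(video, cap=0.1):
    """(likes + comments) / views, scaled so `cap` or more maps to 1.0."""
    if video.views <= 0:
        return 0.0
    return min((video.likes + video.comments) / video.views / cap, 1.0)


def review_depth(video, full_depth_minutes=20.0):
    """Longer videos score higher; omitting downsides halves the score."""
    length = min(video.duration_minutes / full_depth_minutes, 1.0)
    return length * (1.0 if video.mentions_downsides else 0.5)


def creator_weight(video):
    """Unweighted mean of the three signals, in [0, 1]."""
    return (subscriber_stability(video.monthly_subscribers)
            + engagement_ratio(video)
            + review_depth(video)) / 3.0


long_term_review = ReviewVideo(100_000, 6_000, 800, 25.0, True,
                               [50_000, 51_000, 52_000, 53_000])
quick_unboxing = ReviewVideo(100_000, 1_000, 50, 2.0, False,
                             [1_000, 1_200, 9_000, 9_100])
print(creator_weight(long_term_review), creator_weight(quick_unboxing))
```

Under these assumptions the long-term review scores far higher than the two-minute unboxing on every signal, which matches the intent of the weighting even though the specific numbers are invented.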

For specialist categories, we prioritize domain-relevant creators. A dentist’s YouTube channel reviewing electric toothbrushes carries more weight than a general unboxing channel. A chef reviewing kitchen knives carries more weight than a general consumer. Where creator expertise can be established, we apply it as a quality signal.

As with Reddit, we weight recency appropriately. A product review from 2021 is relevant as historical context but may not reflect current production quality. Recent YouTube content is weighted more heavily, with the caveat that very recent reviews may not yet cover long-term product performance.

How retailer data completes the picture

Beyond Amazon, Reddit, and YouTube, we aggregate review data from 1,000+ retailers. This breadth serves several purposes. It catches products that sell well in specific channels (specialty retailers, direct-to-consumer sites) and have strong niche reputations that general Amazon analysis would miss. It also provides a check on Amazon-specific manipulation – a product with thousands of suspicious Amazon reviews but strong performance on specialty retailer sites may be genuinely good and just heavily marketed on Amazon. The reverse (great Amazon reviews, poor specialty retailer performance) is a significant red flag.
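The cross-channel check in this section amounts to comparing Amazon's picture of a product with what specialty retailers show. A minimal sketch, assuming ratings on a 1 to 5 scale and hypothetical thresholds and labels:

```python
def cross_check(amazon_rating, specialty_rating, amazon_reviews_suspicious,
                high=4.0, low=3.0):
    """Classify how Amazon and specialty retailer ratings relate.

    Thresholds and return labels are illustrative assumptions.
    """
    # Great Amazon reviews but poor specialty performance: the red flag case.
    if amazon_rating >= high and specialty_rating <= low:
        return "red_flag"
    # Suspicious Amazon reviews but strong specialty performance: the
    # product may be genuinely good and simply heavily marketed on Amazon.
    if amazon_reviews_suspicious and specialty_rating >= high:
        return "possibly_genuine_but_heavily_marketed"
    return "no_cross_channel_signal"


print(cross_check(4.7, 2.6, amazon_reviews_suspicious=True))
print(cross_check(4.6, 4.5, amazon_reviews_suspicious=True))
```

The two named cases mirror the two scenarios in the paragraph above; everything else falls through to a neutral label rather than being forced into one of them.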

Common misconceptions

More data doesn’t automatically mean better accuracy. We weight data by quality signals, not just volume. A single long-form YouTube review from a credible creator with detailed testing is worth more in the SmartScore than 20 brief Reddit comments from new accounts. Volume matters, but quality weighting matters more.

Reddit isn’t a perfect signal. Reddit communities can have biases – brand enthusiast communities overrepresent loyal users; general communities overrepresent people who had problems (they were motivated to post). We account for community context in our analysis rather than treating Reddit as an oracle.

SeekShop’s analysis isn’t real-time. We update SmartScores continuously, but there can be lag between new product data appearing and the SmartScore reflecting it. For very recently launched products, SmartScore data may be preliminary. We indicate data confidence levels alongside scores.

Practical takeaways

The most actionable implication of how SeekShop aggregates data: a high SmartScore requires consistent positive sentiment across Amazon, Reddit, YouTube, and retailer data simultaneously. Faking this across all four data streams is far more difficult than faking a single platform’s rating. Products that score well on SeekShop have earned their reputation across multiple independent platforms.
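To see why inflating one platform does little for a score that rewards consistency, consider a toy composite that mixes the average platform score with the weakest one. The formula, the `min_share` parameter, and the 0 to 1 score scale are assumptions for illustration; the article does not publish the SmartScore formula.

```python
def consistency_score(platform_scores, min_share=0.5):
    """Blend the mean platform score with the lowest platform score.

    platform_scores: dict of platform name -> score in [0, 1] (assumed).
    min_share: how much of the result comes from the weakest platform.
    """
    values = list(platform_scores.values())
    if not values:
        raise ValueError("at least one platform score is required")
    mean = sum(values) / len(values)
    return (1.0 - min_share) * mean + min_share * min(values)


earned = {"amazon": 0.9, "reddit": 0.9, "youtube": 0.9, "retailers": 0.9}
gamed = {"amazon": 0.95, "reddit": 0.3, "youtube": 0.3, "retailers": 0.3}
print(consistency_score(earned), consistency_score(gamed))
```

In this toy model, pushing the Amazon score from 0.3 to 0.95 while the other three stay at 0.3 lifts the composite by less than 0.1, whereas a product that scores 0.9 everywhere keeps its full 0.9.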

Conversely, a low SmartScore despite a high Amazon rating is the signal that should immediately prompt additional research. It means the Amazon rating isn’t reflecting the cross-platform consensus – which is almost always because of manipulation, a specific Amazon reviewer demographic that differs from the broader population, or a quality change that Amazon reviews haven’t caught yet.

How SeekShop helps

The aggregation methodology described here is what powers the SmartScore you see when you use seekshop.co/review or the SeekShop Chrome extension. Each SmartScore represents a trust-weighted synthesis of hundreds to thousands of data points across Amazon, Reddit, YouTube, and 1,000+ retailers. It’s the research you’d want to do manually, automated and delivered in seconds.

Frequently asked questions

How many Reddit comments does SeekShop typically use for a SmartScore?

Volume varies significantly by product and category. Popular consumer products (major appliances, widely-discussed electronics) may have thousands of relevant Reddit mentions. Niche products may have dozens. SmartScore confidence is indicated alongside the score – lower-volume products show as less certain.

Does SeekShop analyze YouTube comments or just video content?

Primarily video content and metadata (title, description, channel signals). Comment sections are noisier and harder to quality-filter than the creator’s own content. For YouTube, we weight the creator’s assessment over comment section sentiment.

Does SeekShop have access to private or deleted Reddit posts?

No. SeekShop accesses publicly available Reddit content through Reddit’s API. Deleted or removed content is not included in the analysis.

How does SeekShop handle conflicting signals between platforms?

Platform discrepancies are surfaced explicitly in the SmartScore breakdown. When Reddit and Amazon diverge significantly, we flag this rather than averaging it away – a discrepancy is itself informative. The full SmartScore breakdown shows what each platform is saying, not just the composite number.
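A breakdown that surfaces disagreement instead of averaging it away might look like the sketch below. The dictionary layout, the `gap_threshold` value, and the plain-mean composite are hypothetical; they are not SeekShop's actual output format.

```python
from itertools import combinations


def score_breakdown(platform_scores, gap_threshold=0.3):
    """Return per-platform scores plus any platform pairs that diverge.

    platform_scores: dict of platform name -> score in [0, 1] (assumed).
    """
    if not platform_scores:
        raise ValueError("at least one platform score is required")
    discrepancies = []
    # Compare every pair of platforms in a stable (alphabetical) order.
    for a, b in combinations(sorted(platform_scores), 2):
        gap = abs(platform_scores[a] - platform_scores[b])
        if gap > gap_threshold:
            discrepancies.append((a, b, round(gap, 3)))
    composite = sum(platform_scores.values()) / len(platform_scores)
    return {
        "composite": composite,
        "platforms": dict(platform_scores),
        "discrepancies": discrepancies,
    }


result = score_breakdown({"amazon": 0.9, "reddit": 0.4, "youtube": 0.5})
for platform_a, platform_b, gap in result["discrepancies"]:
    print(f"{platform_a} and {platform_b} diverge by {gap}")
```

Returning the flagged pairs alongside the composite keeps the disagreement visible to the reader, which is the point the answer above makes about discrepancies being informative in their own right.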

Bottom line

SeekShop’s Reddit and YouTube aggregation turns the research process that sophisticated shoppers do manually into an automated, weighted, and continuously updated signal. The SmartScore reflects what the cross-platform consumer consensus says about a product – and that consensus is substantially harder to manipulate than any single platform’s rating.

Check any product at seekshop.co/review or install the SeekShop Chrome extension to get SmartScores as you browse.